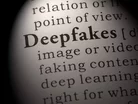

Deepfakes to become a growing trend in 2022, says IntSights

Deepfake technology has been making headlines in recent months. Cybersecurity companies believe that deepfakes are set to grow in popularity in 2022 and are something we need to look out for.

What are Deepfakes?

Deepfakes are synthetic media in which a person in an existing image or video is replaced with someone else's likeness. While the act of faking content is not new, deepfakes leverage powerful techniques from machine learning and artificial intelligence to manipulate or generate visual and audio content with a high potential to deceive. The main machine learning methods used to create deepfakes are based on deep learning and involve training generative neural network architectures, such as autoencoders or generative adversarial networks.

Deepfakes have garnered widespread attention for their uses in celebrity pornographic videos, revenge porn, fake news, hoaxes, and financial fraud. This has elicited responses from both industry and government to detect and limit their use.

Future Deepfakes trend

Alon Arvatz, Senior Director of Product Management at IntSights, a Rapid7 Company, says deepfakes are a trend we all need to be wary of in the future.

"Deepfakes are not yet a trend, and so far, we haven’t seen a lot of attacks leveraging deepfakes as a method. However, the technique is something that is emerging and we’re starting to see signs that suggest it will be a trend to be wary of in the future.

"Using artificial intelligence, cybercriminals or fraudsters use deepfake technology to either impersonate the face or voice, or both, of a person in order to carry out scams, fraud and social engineering attacks.

"Based on the hacker chatter that we track on the dark web, we’ve seen traffic around deepfake attacks increase by 43% since 2019. Based on this, we can definitely expect hacker interest in deepfake technology to rise and will inevitably see deepfake attacks becoming a more utilised method for hackers in 2022.

"Furthermore, like many other cyberattack methods, we predict that threat actors will look to monetise the use of deepfakes by starting to offer deepfake-as-a-service, providing less skilled or knowledgeable hackers with the tools to leverage these attacks through just the click of a button and a small payment," concludes Arvatz.

According to Wikipedia, deepfakes are being used across many sectors, from politics to acting, but are also being exploited for blackmail and fraud.

Audio deepfakes have been used as part of social engineering scams, fooling people into thinking they are receiving instructions from a trusted individual. In 2019, a UK-based energy firm's CEO was scammed over the phone when he was ordered to transfer €220,000 into a Hungarian bank account by an individual who used audio deepfake technology to impersonate the voice of the firm's parent company's chief executive.

Deepfakes can be used to generate blackmail materials that falsely incriminate a victim. However, since the fakes cannot reliably be distinguished from genuine materials, victims of actual blackmail can now claim that the true artifacts are fakes, granting them plausible deniability. The effect is to void credibility of existing blackmail materials, which erases loyalty to blackmailers and destroys the blackmailer's control. This phenomenon can be termed "blackmail inflation", since it "devalues" real blackmail, rendering it worthless. It is possible to repurpose commodity cryptocurrency mining hardware with a small software program to generate this blackmail content for any number of subjects in huge quantities, driving up the supply of fake blackmail content limitlessly and in highly scalable fashion. A report by the American Congressional Research Service warned that deepfakes could be used to blackmail elected officials or those with access to classified information for espionage or influence purposes.
